The term Hadoop is often used for both base modules and sub-modules and also the ecosystem, or collection of additional software packages that can be installed on top of or alongside Hadoop, such as Apache Pig, Apache Hive, Apache HBase, Apache Phoenix, Apache Spark, Apache ZooKeeper, Apache Impala, Apache Flume, Apache Sqoop, Apache Oozie, and Apache Storm.

Apache Hadoop's MapReduce and HDFS components were inspired by Google papers on MapReduce and Google File System. The Hadoop framework itself is mostly written in the Java programming language, with some native code in C and command line utilities written as shell scripts. Though MapReduce Java code is common, any programming language can be used with Hadoop Streaming to implement the map and reduce parts of the user's program. Other projects in the Hadoop ecosystem expose richer user interfaces.

According to its co-founders, Doug Cutting and Mike Cafarella, the genesis of Hadoop was the Google File System paper that was published in October 2003. This paper spawned another one from Google, "MapReduce: Simplified Data Processing on Large Clusters". Development started on the Apache Nutch project, but was moved to the new Hadoop subproject in January 2006. Doug Cutting, who was working at Yahoo! at the time, named it after his son's toy elephant.
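To make the map and reduce parts mentioned above a little more concrete, here is a minimal word-count sketch in Python, written in the style Hadoop Streaming expects (tab-separated key/value text records). The function names `mapper`, `reducer`, and `run_local` are my own, and `run_local` only simulates the map, sort, and reduce steps in a single process. It does not talk to a Hadoop cluster.

```python
from itertools import groupby


def mapper(lines):
    """Map step: emit one "word<TAB>1" record per word."""
    for line in lines:
        for word in line.split():
            yield f"{word.lower()}\t1"


def reducer(sorted_records):
    """Reduce step: sum the counts per word. Input must be sorted by key."""
    parsed = (record.split("\t", 1) for record in sorted_records)
    for word, group in groupby(parsed, key=lambda kv: kv[0]):
        yield f"{word}\t{sum(int(count) for _, count in group)}"


def run_local(lines):
    """Simulate map -> sort -> reduce locally (an illustration, not Hadoop itself)."""
    return list(reducer(sorted(mapper(lines))))


if __name__ == "__main__":
    for record in run_local(["Hadoop stores data", "hadoop processes data"]):
        print(record)
```

In a real streaming job the mapper and reducer would normally be two separate scripts reading standard input and writing standard output, with the framework doing the sorting in between.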
All the modules in Hadoop are designed with a fundamental assumption that hardware failures are common occurrences and should be automatically handled by the framework.

The core of Apache Hadoop consists of a storage part, known as Hadoop Distributed File System (HDFS), and a processing part which is a MapReduce programming model. Hadoop splits files into large blocks and distributes them across nodes in a cluster. It then transfers packaged code into nodes to process the data in parallel. This approach takes advantage of data locality, where nodes manipulate the data they have access to. This allows the dataset to be processed faster and more efficiently than it would be in a more conventional supercomputer architecture that relies on a parallel file system where computation and data are distributed via high-speed networking.

The base Apache Hadoop framework is composed of the following modules:

- Hadoop Common – contains libraries and utilities needed by other Hadoop modules.
- Hadoop Distributed File System (HDFS) – a distributed file-system that stores data on commodity machines, providing very high aggregate bandwidth across the cluster.
- Hadoop YARN – (introduced in 2012) a platform responsible for managing computing resources in clusters and using them for scheduling users' applications.
- Hadoop MapReduce – an implementation of the MapReduce programming model for large-scale data processing.
- Hadoop Ozone – (introduced in 2020) an object store for Hadoop.
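The idea of splitting files into blocks and running work where the data already sits can be sketched in a few lines of Python. This is a toy model only: the 128 MiB block size, the replication factor of 3, the round-robin placement, and all function names are assumptions I chose for illustration. Real HDFS placement and YARN scheduling are considerably more involved.

```python
def split_into_blocks(file_size, block_size=128 * 1024 * 1024):
    """Return (offset, length) pairs that cover a file of file_size bytes."""
    return [
        (offset, min(block_size, file_size - offset))
        for offset in range(0, file_size, block_size)
    ]


def place_blocks(num_blocks, nodes, replication=3):
    """Toy round-robin replica placement (an assumption, not the HDFS policy)."""
    copies = min(replication, len(nodes))
    return {
        block: [nodes[(block + i) % len(nodes)] for i in range(copies)]
        for block in range(num_blocks)
    }


def schedule_local(placement):
    """Give each block's task to the least-loaded node that holds a replica."""
    load = {}
    assignment = {}
    for block, holders in placement.items():
        node = min(holders, key=lambda n: load.get(n, 0))
        assignment[block] = node
        load[node] = load.get(node, 0) + 1
    return assignment


if __name__ == "__main__":
    blocks = split_into_blocks(300 * 1024 * 1024)
    placement = place_blocks(len(blocks), ["node1", "node2", "node3", "node4"])
    print(schedule_local(placement))
```

Because every task is assigned to a node that already stores a copy of its block, no block has to cross the network before processing starts, which is the point of data locality.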

Hadoop provides a software framework for distributed storage and processing of big data using the MapReduce programming model. It was originally designed for computer clusters built from commodity hardware, which is still the common use. It has since also found use on clusters of higher-end hardware.

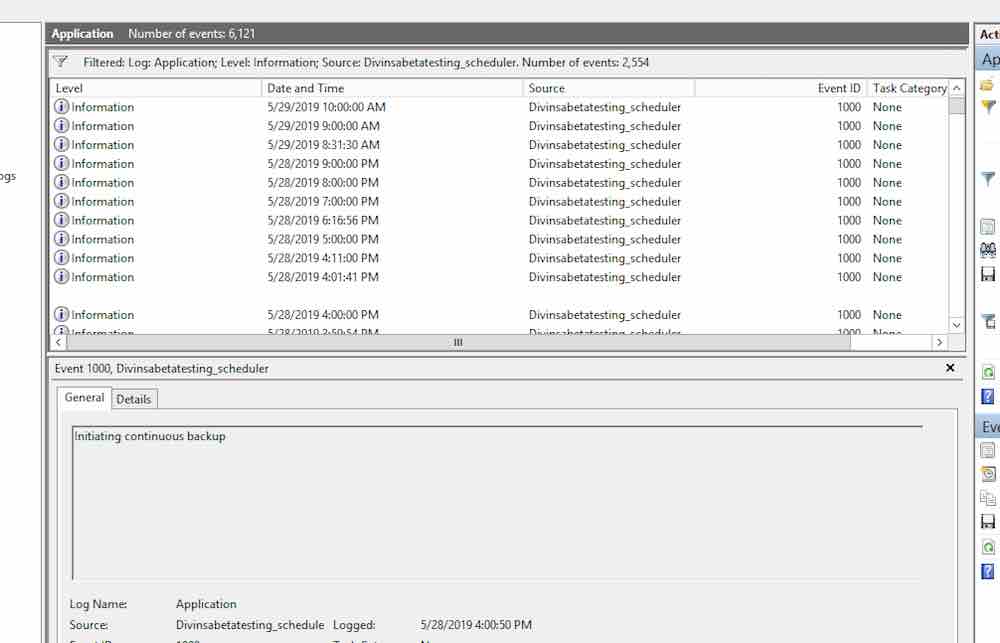

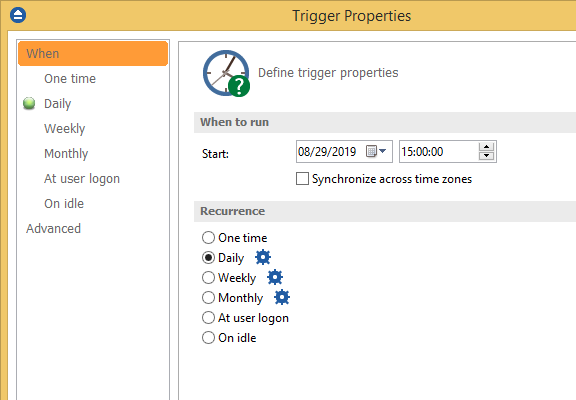

Apache Hadoop (/həˈduːp/) is a collection of open-source software utilities that facilitates using a network of many computers to solve problems involving massive amounts of data and computation. Stable releases: 2.10.2 (May 31, 2022), 3.2.4 (July 22, 2022), and 3.3.4 (August 8, 2022).

Working in IT, I am constantly having to inform or remind people about the importance of backing up their files. I've seen many people lose important documents, favorite music, or family photos that they can't replace because they only had them on their computer with no form of backup.

To complete a backup of files on a PC, some form of storage location will be needed. This can be done using a variety of storage options, which come in a wide range of storage capacities. Some of the different types of storage are USB flash drives, portable USB external hard drives, desktop external hard drives, or a secondary internal hard drive within the computer. These usually come with all the necessary cables to connect to your computer. These devices can be purchased at just about any retail store that sells electronics, such as Best Buy, Staples, or Walmart.

Disclaimer: Since this process is just copying files, there should be no ill effect to the computer. The chosen location to store the backup must have enough free space or the process will not complete.

For this guide I am going to use the Windows Backup and Restore Tool. This can be found a few different ways, but I am going to show what I consider the simplest way.
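Since the backup will not complete without enough free space, it can help to check that before starting. The Python sketch below is separate from the Windows Backup and Restore Tool and is only one possible way to do the check. The function names are my own, and the example paths in the usage note are hypothetical.

```python
import os
import shutil


def total_size(paths):
    """Add up the size in bytes of the given files and everything under the given folders."""
    total = 0
    for path in paths:
        if os.path.isfile(path):
            total += os.path.getsize(path)
            continue
        for root, _dirs, files in os.walk(path):
            for name in files:
                full_path = os.path.join(root, name)
                if os.path.isfile(full_path):
                    total += os.path.getsize(full_path)
    return total


def has_enough_space(sources, destination, free_bytes=None):
    """Return True if the destination has at least as much free space as the sources need."""
    if free_bytes is None:
        # Ask the operating system how much space is free on the destination drive.
        free_bytes = shutil.disk_usage(destination).free
    return total_size(sources) <= free_bytes
```

For example, `has_enough_space([r"C:\Users\Me\Documents"], "E:\\")` would compare a documents folder against an external drive mounted as `E:`. Both paths are placeholders to be replaced with your own.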
